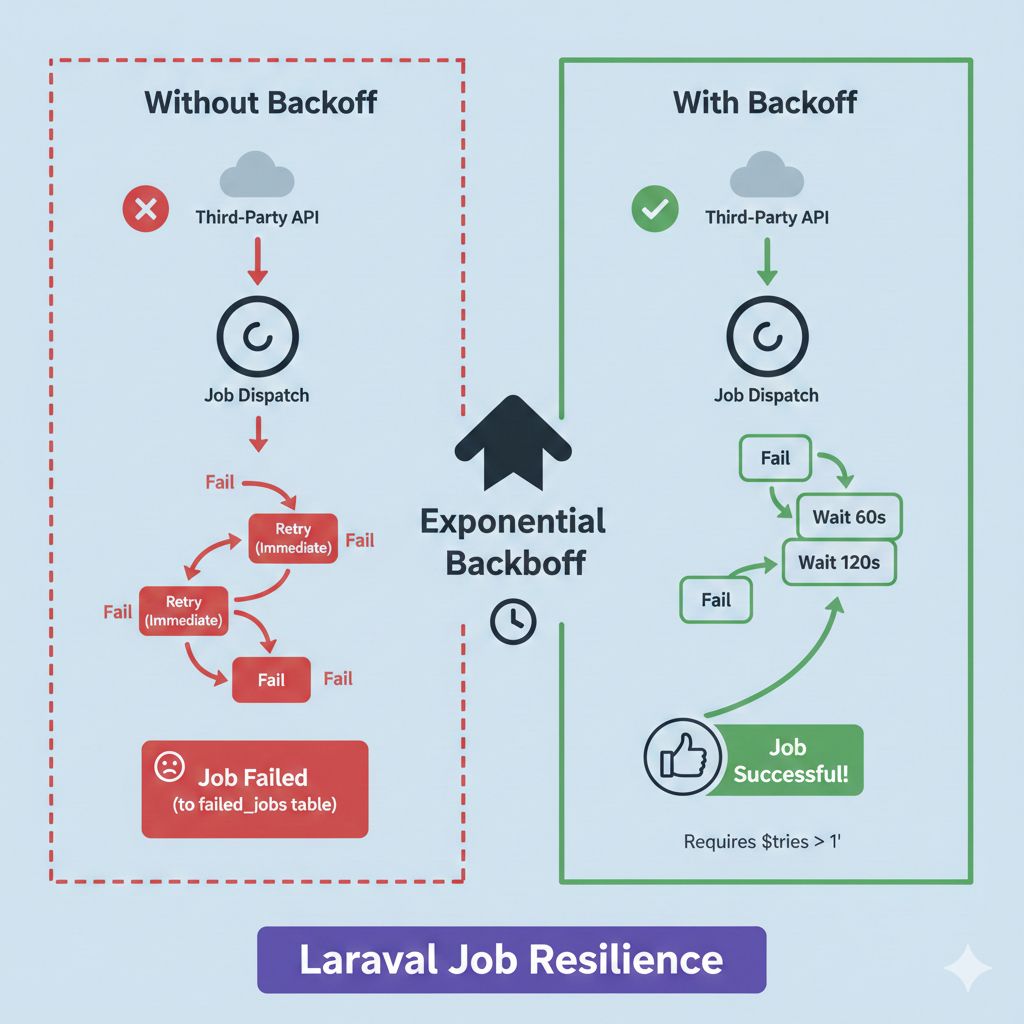

Ever had a Laravel job fail because an external API was down or hit a rate limit?

By default, Laravel is "one and done." If a job fails, it goes straight to the failed_jobs table. We can fix this by adding a public $tries property to the job class (e.g., public $tries = 3, which means three attempts in total, including the first run), but there’s a catch.

If you only set $tries, Laravel releases the job back onto the queue with no delay, so the next attempt runs almost immediately. If you're hitting a rate limit or the external service is down, retrying a moment later will most likely fail again. You’ll burn through all your attempts in seconds, and the job will still end up in failed_jobs.

The Fix: Use an Increasing Backoff

To actually give the API time to breathe, you need the $backoff property. This tells Laravel how many seconds to wait before each retry. Pass an array, and each retry gets its own (usually longer) delay.

public $tries = 3;

public $backoff = [20, 60, 120];
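For context, here is a minimal sketch of where those two properties sit inside a full job class. The class name, the endpoint URL, and the payload are placeholders made up for illustration, and the trait list assumes a conventional Laravel job; adjust it to your own app and version.

```php
<?php

namespace App\Jobs;

use Illuminate\Bus\Queueable;
use Illuminate\Contracts\Queue\ShouldQueue;
use Illuminate\Foundation\Bus\Dispatchable;
use Illuminate\Queue\InteractsWithQueue;
use Illuminate\Queue\SerializesModels;
use Illuminate\Support\Facades\Http;

// Hypothetical job: the class name, URL, and payload are placeholders.
class SyncOrderToPartnerApi implements ShouldQueue
{
    use Dispatchable, InteractsWithQueue, Queueable, SerializesModels;

    // Total number of attempts, including the first run.
    public $tries = 3;

    // Seconds to wait before each retry, in order.
    public $backoff = [20, 60, 120];

    public function __construct(public int $orderId)
    {
    }

    public function handle(): void
    {
        // throw() raises an exception on a 4xx/5xx response, so the
        // worker records a failed attempt and releases the job for retry.
        Http::timeout(10)
            ->post('https://partner.example.com/api/orders', [
                'order_id' => $this->orderId,
            ])
            ->throw();
    }
}
```

You would dispatch it as usual, for example with SyncOrderToPartnerApi::dispatch($order->id), where $order stands in for whatever model you are syncing.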

How this works in the real world:

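- 1st Fail: Laravel waits 20 seconds, then runs attempt 2.

- 2nd Fail: Laravel waits 60 seconds, then runs attempt 3.

- 3rd Fail: With $tries = 3 there are no attempts left, so the job goes to failed_jobs. The 120-second delay only kicks in if you raise $tries to 4 or more.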

This gives the external service time to recover, or your rate limit time to reset, before the job tries again.
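If you want to sanity-check a schedule before shipping it, the lookup can be modelled in a few lines of plain PHP. This is an illustrative sketch of the documented behaviour, not Laravel's actual source, and delayAfterFailure is a made-up helper name. It assumes the delay is picked by the number of the attempt that just failed, and that the last value repeats when the array is shorter than the number of retries.

```php
<?php

// Illustrative helper (hypothetical name, not part of Laravel).
// Returns the delay in seconds applied after the given failed attempt
// (1-based), or null when no attempts remain and the job is marked failed.
function delayAfterFailure(array $backoff, int $tries, int $failedAttempt): ?int
{
    if ($failedAttempt >= $tries) {
        return null; // attempts exhausted, nothing left to wait for
    }

    if ($backoff === []) {
        return 0; // no backoff configured: retry without delay
    }

    // Use the entry for this attempt, or repeat the last entry
    // when the list is shorter than the number of retries.
    return $backoff[$failedAttempt - 1] ?? $backoff[array_key_last($backoff)];
}

foreach ([1, 2, 3] as $attempt) {
    $delay = delayAfterFailure([20, 60, 120], 3, $attempt);
    echo "Attempt {$attempt} failed: "
        . ($delay === null ? "no retries left" : "retry in {$delay}s")
        . "\n";
}
// Prints:
// Attempt 1 failed: retry in 20s
// Attempt 2 failed: retry in 60s
// Attempt 3 failed: no retries left
```

With three attempts only the first two delays are ever used. Call the helper with 4 as the second argument and the third failure returns 120.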

Quick Note: Remember that $backoff only matters when the job gets more than one attempt, so make sure $tries is set to more than 1. You can’t wait for a retry if you aren't retrying!

It’s a small change, but it makes your background tasks way more resilient. 🚀